Introduction

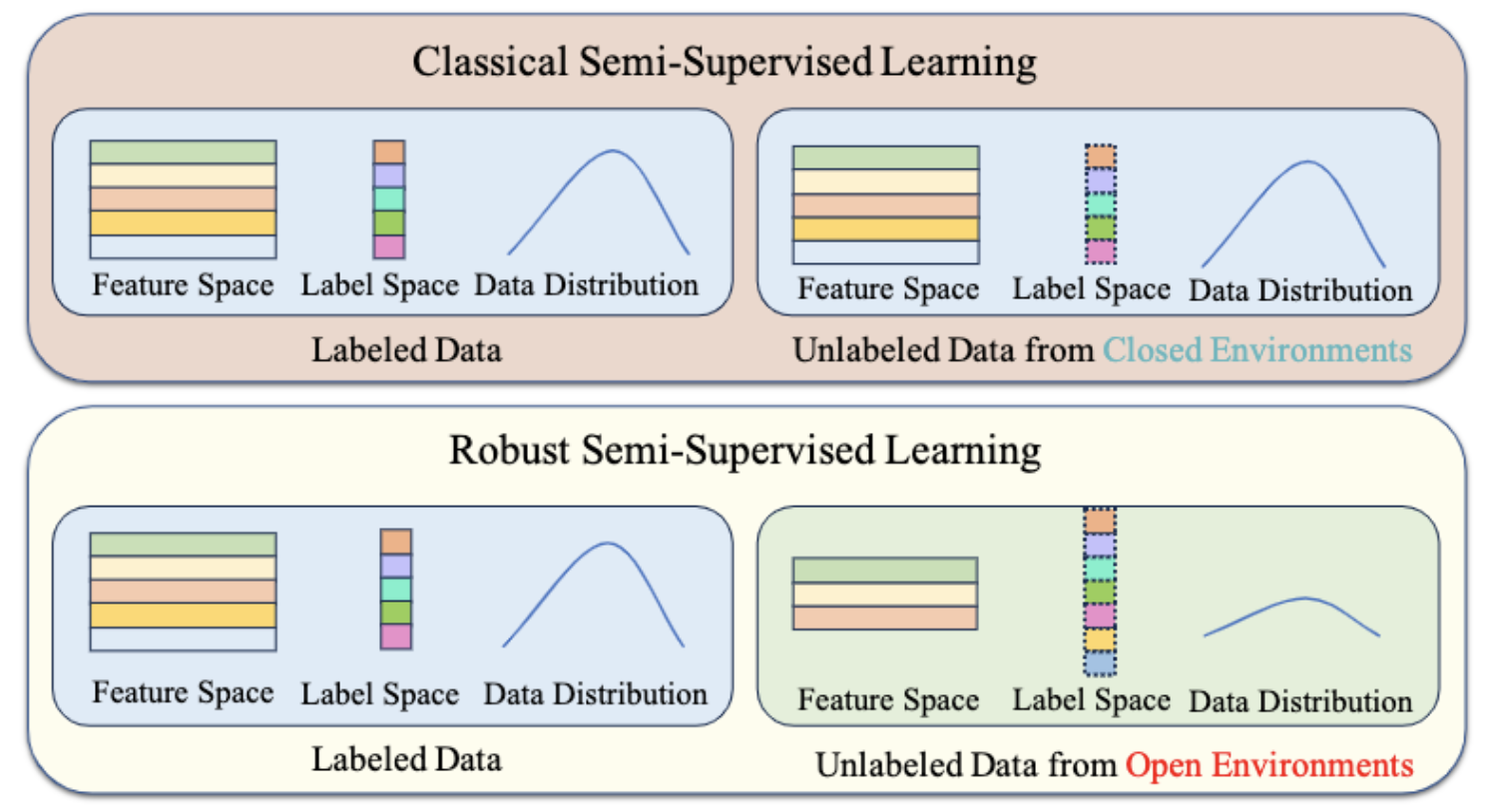

Classical semi-supervised learning (SSL) algorithms usually perform well only when all samples come from the same distribution. To apply SSL techniques more widely, there is an urgent need for robust SSL methods that do not suffer severe performance degradation when unlabeled data are inconsistent with labeled data. However, research on robust SSL is still immature and often confused. Previous work has approached the problem from a static perspective, thereby conflating local adaptability with global robustness.

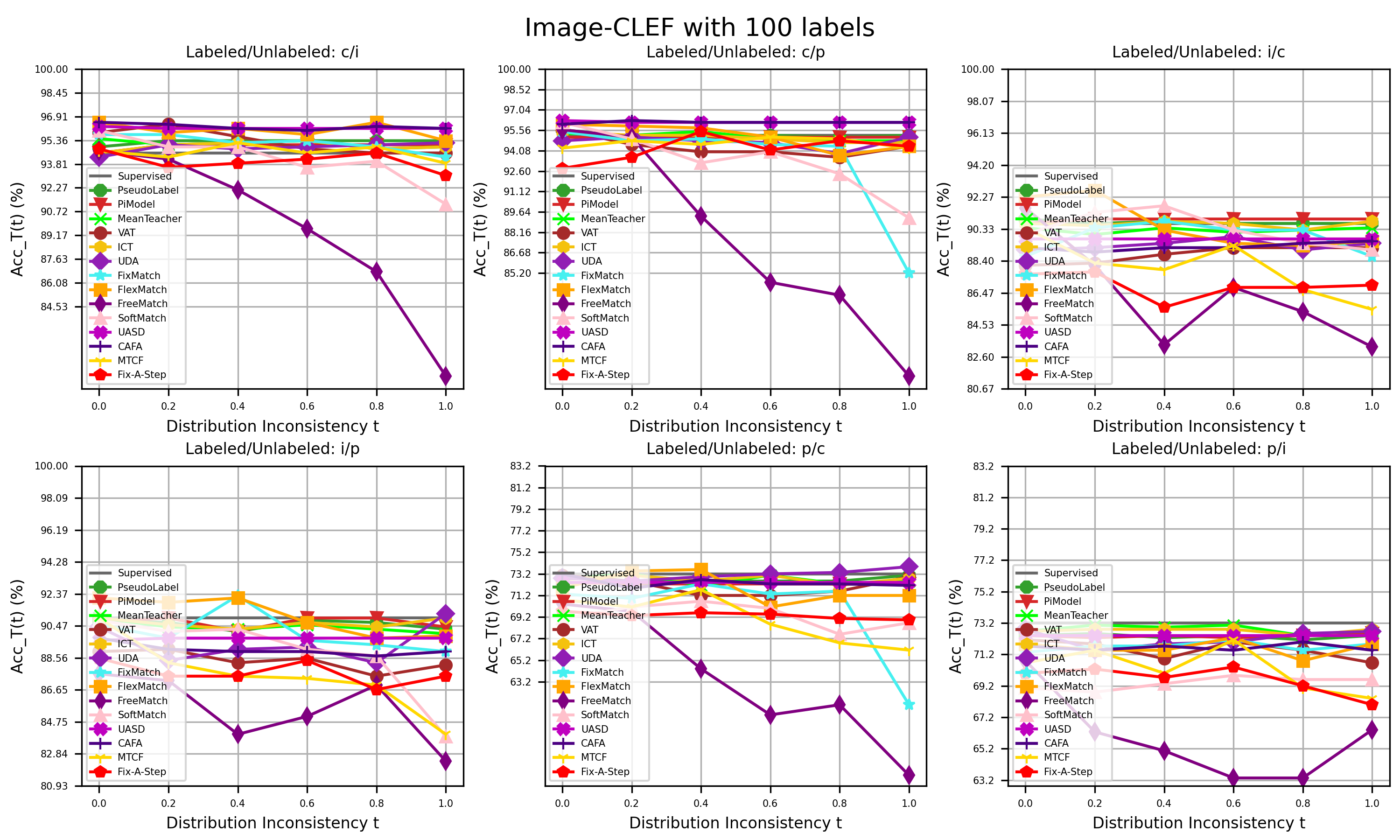

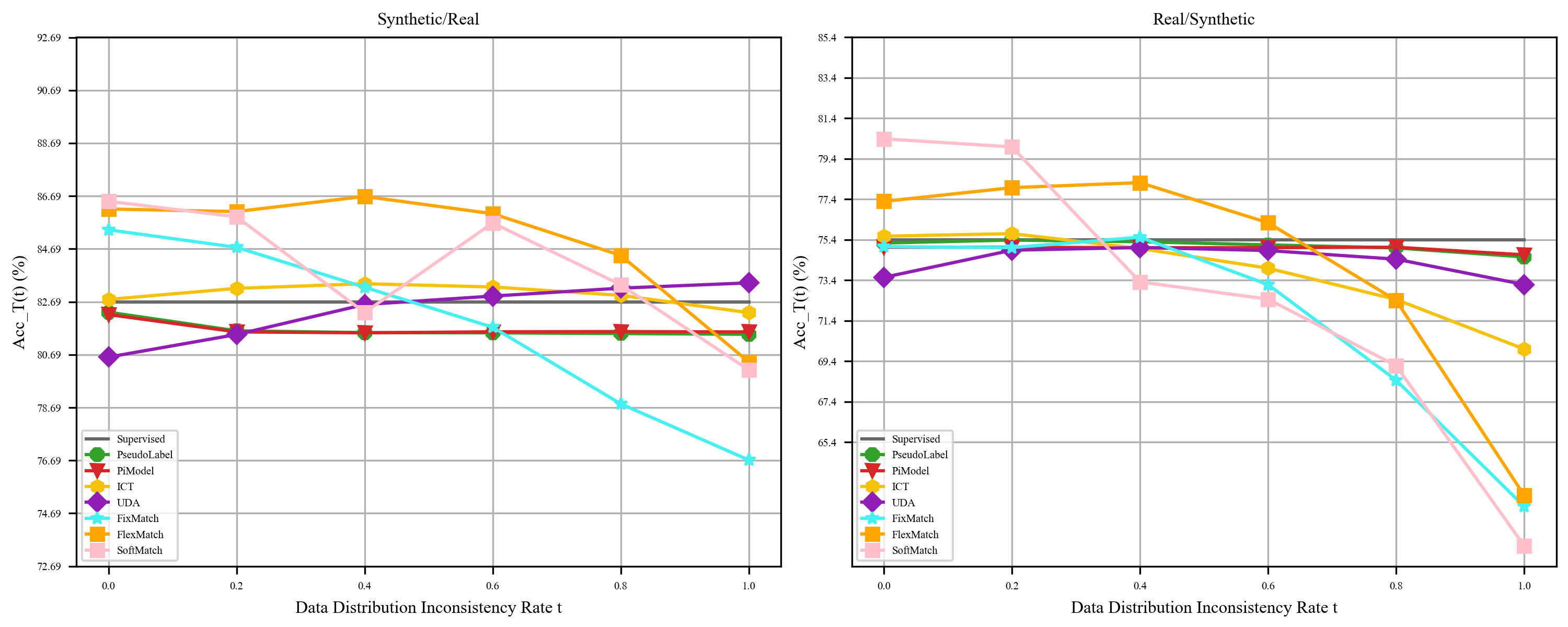

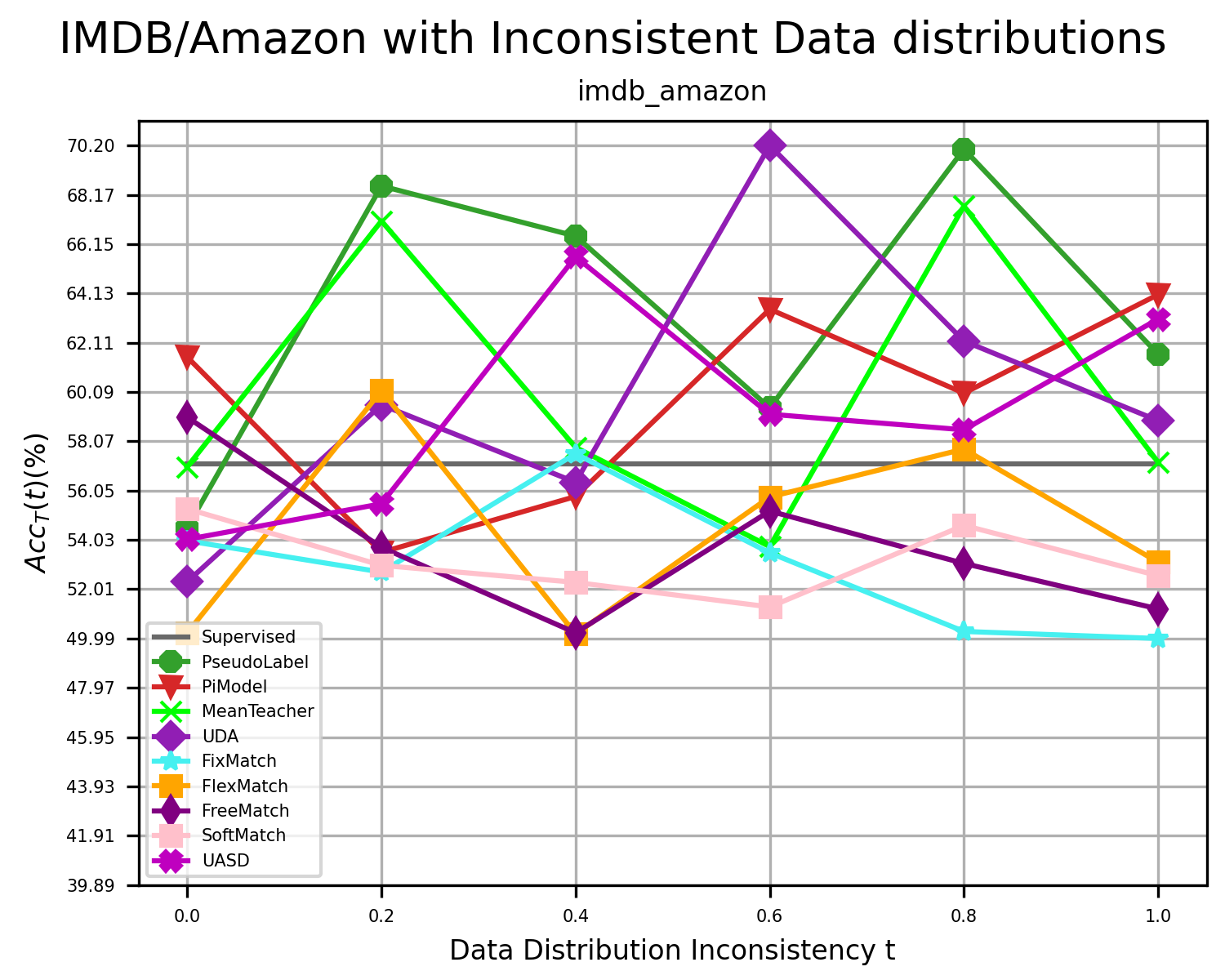

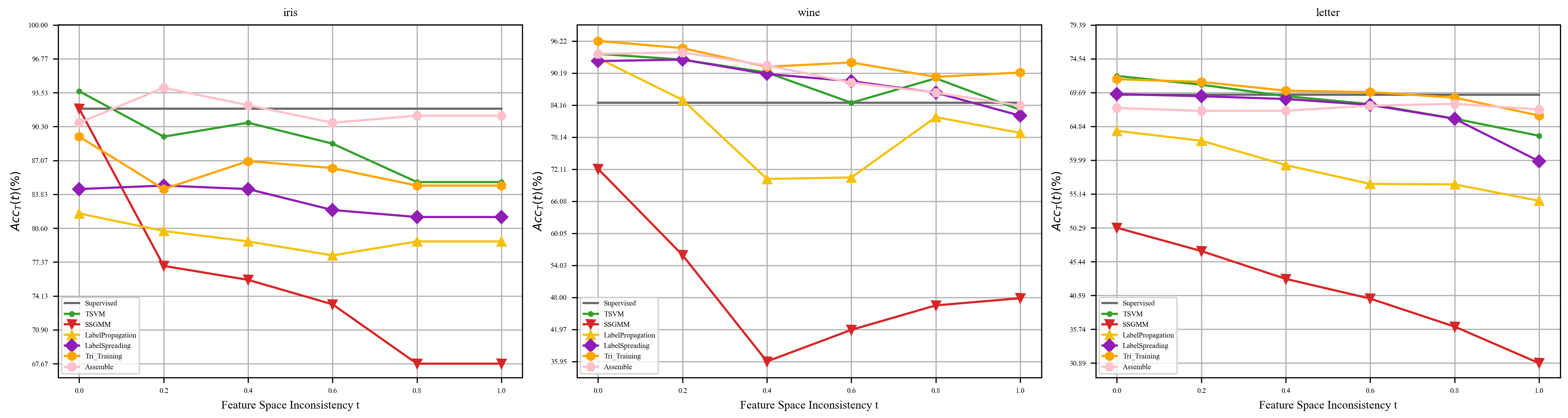

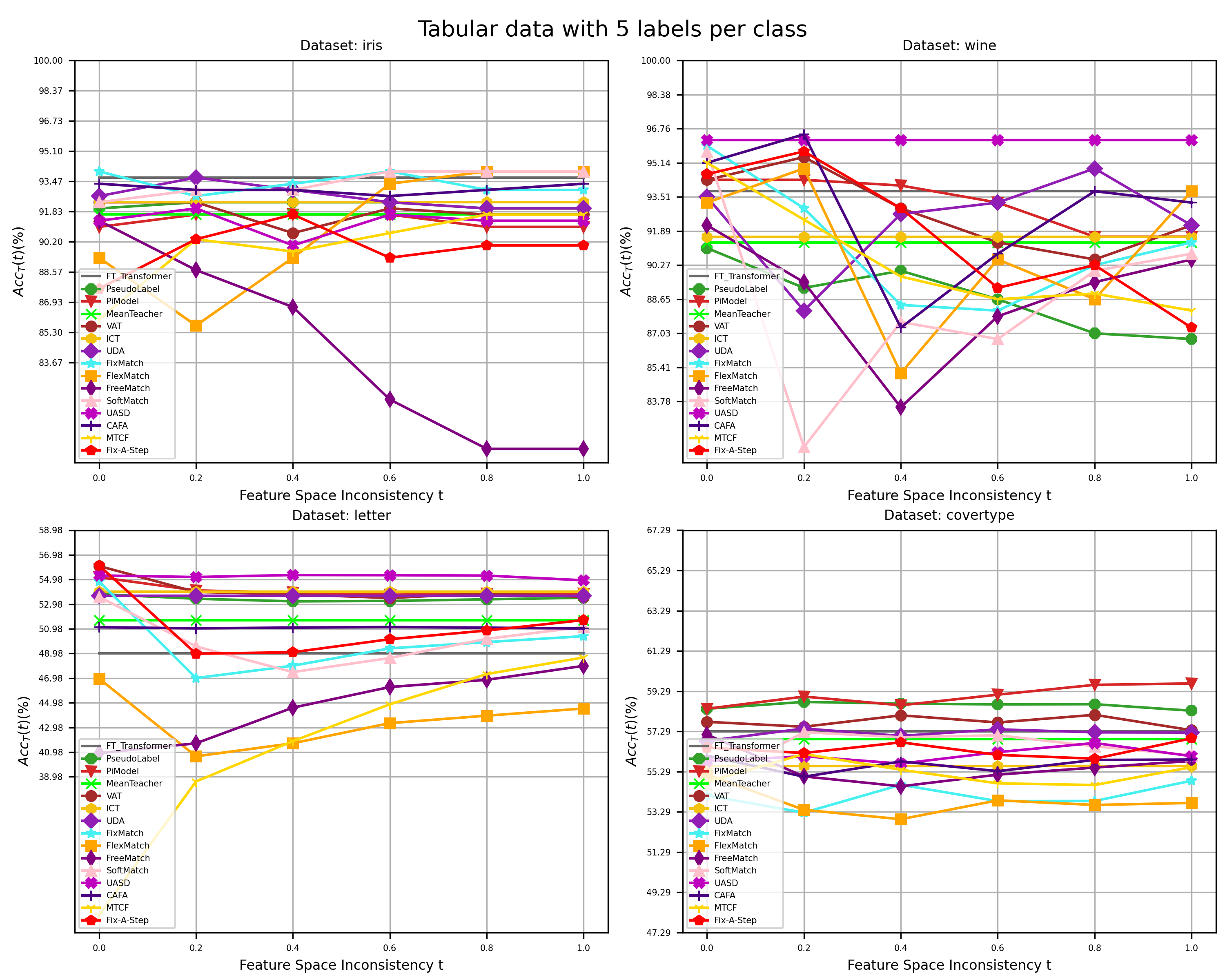

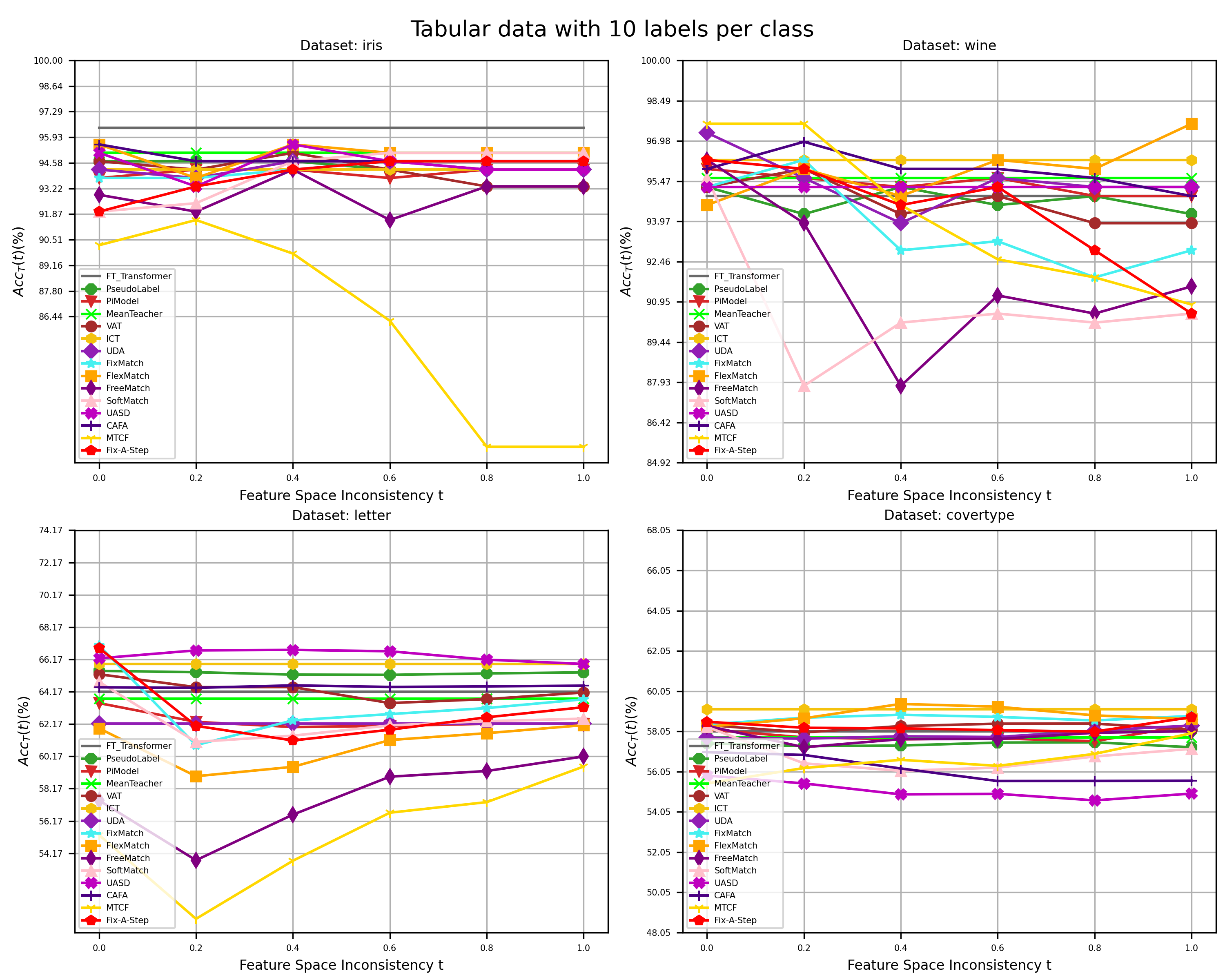

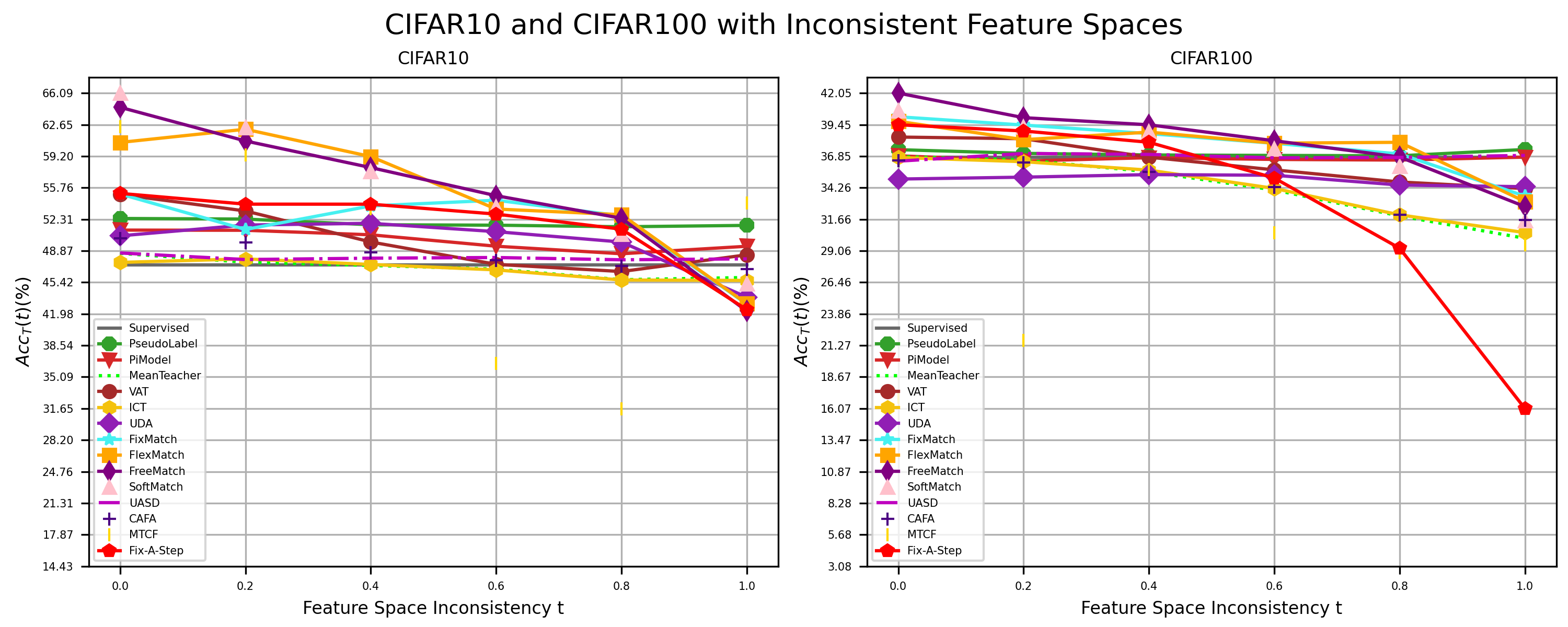

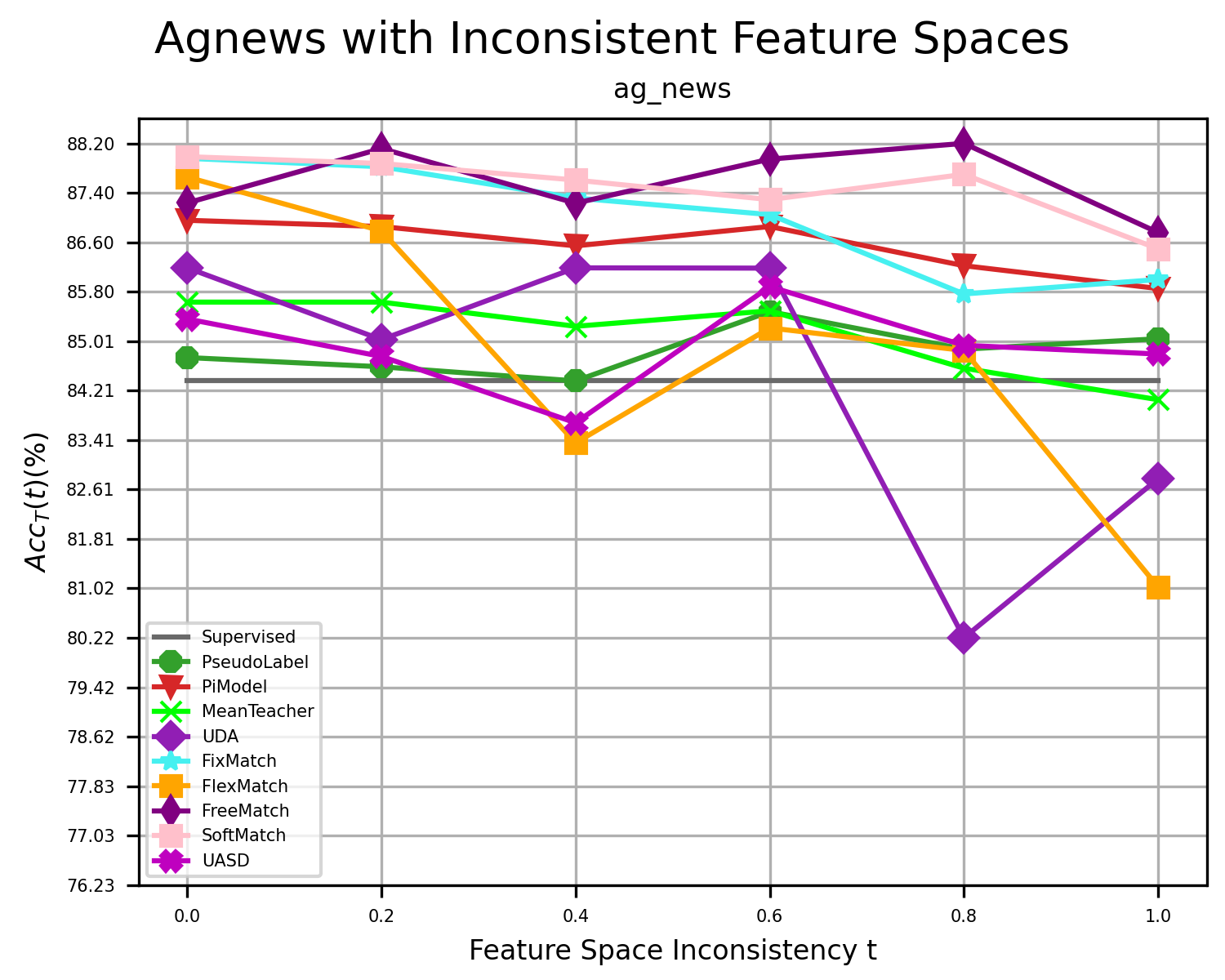

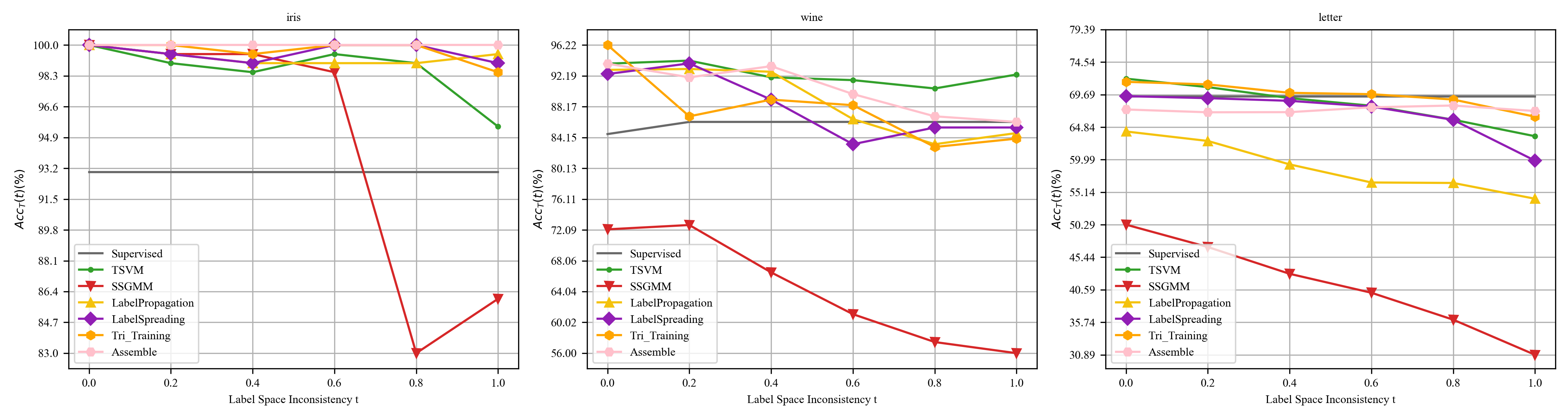

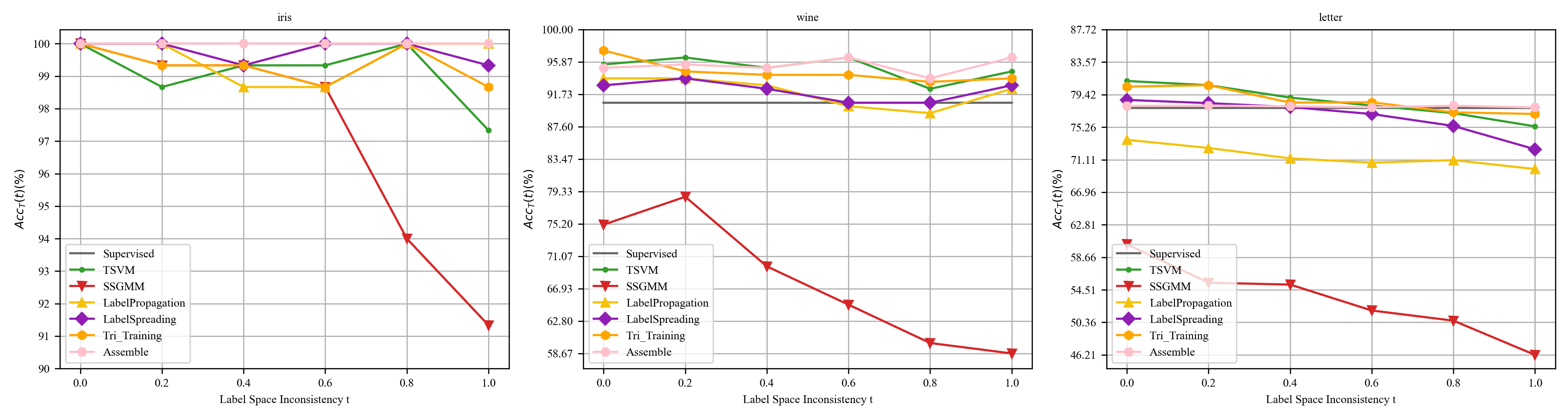

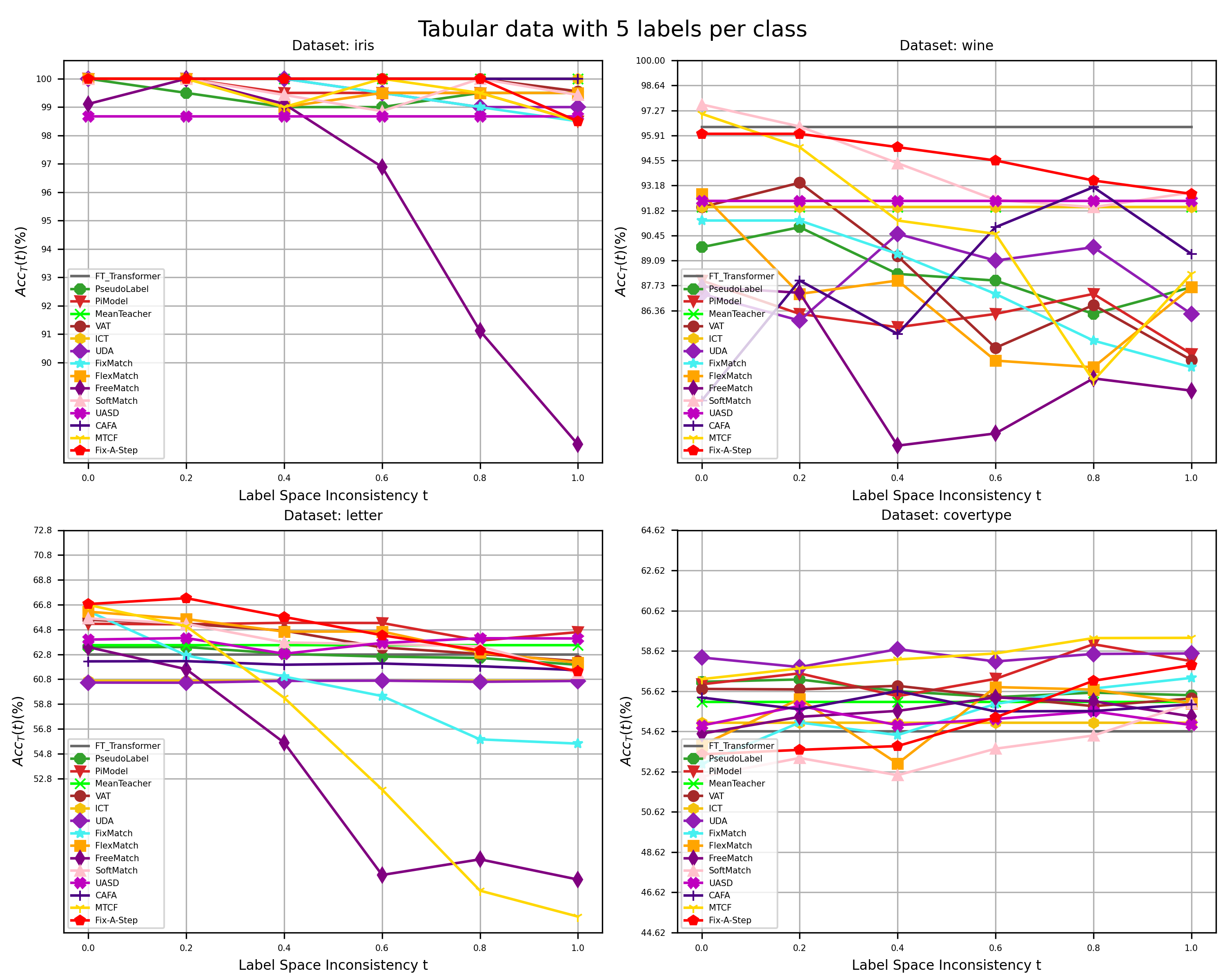

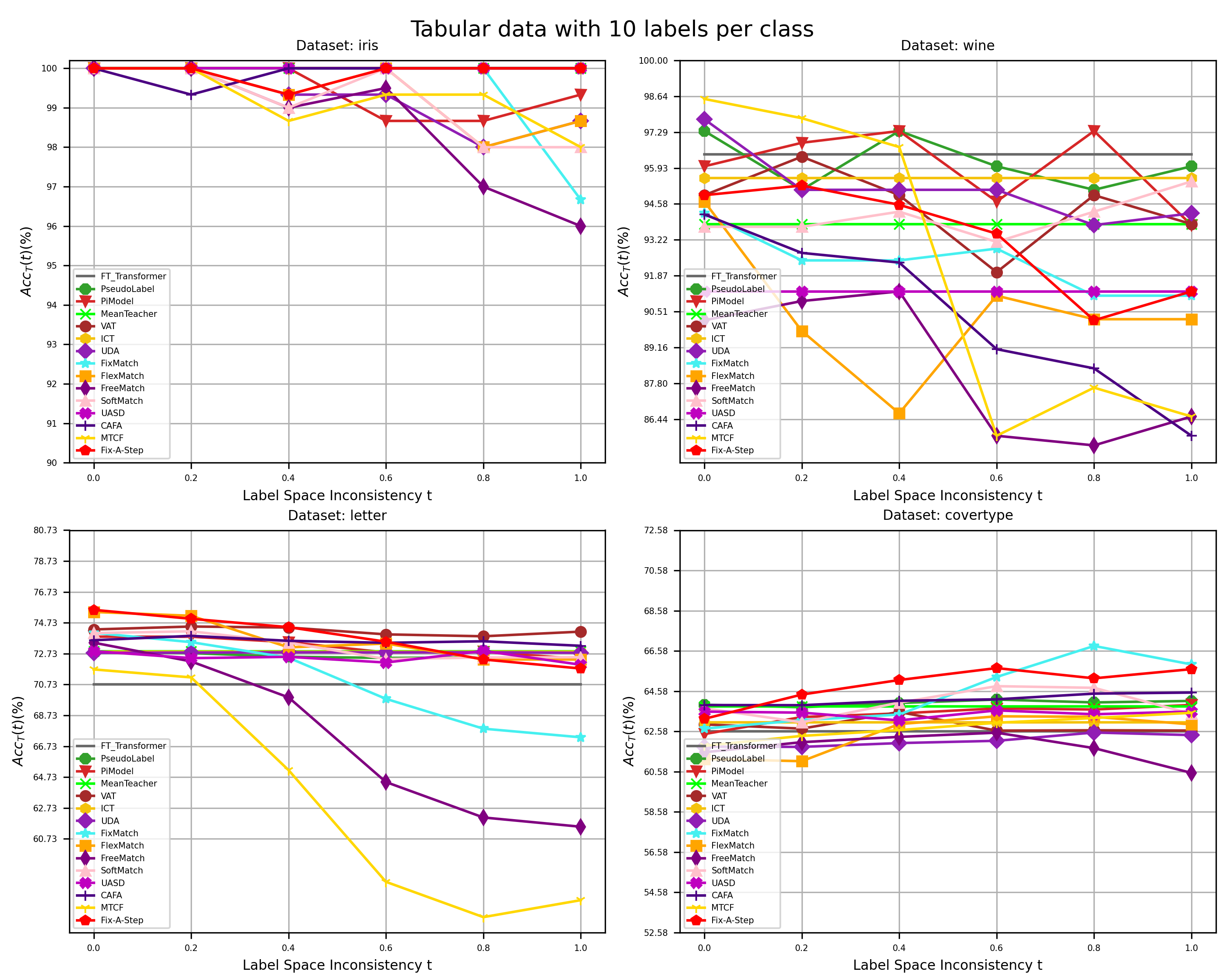

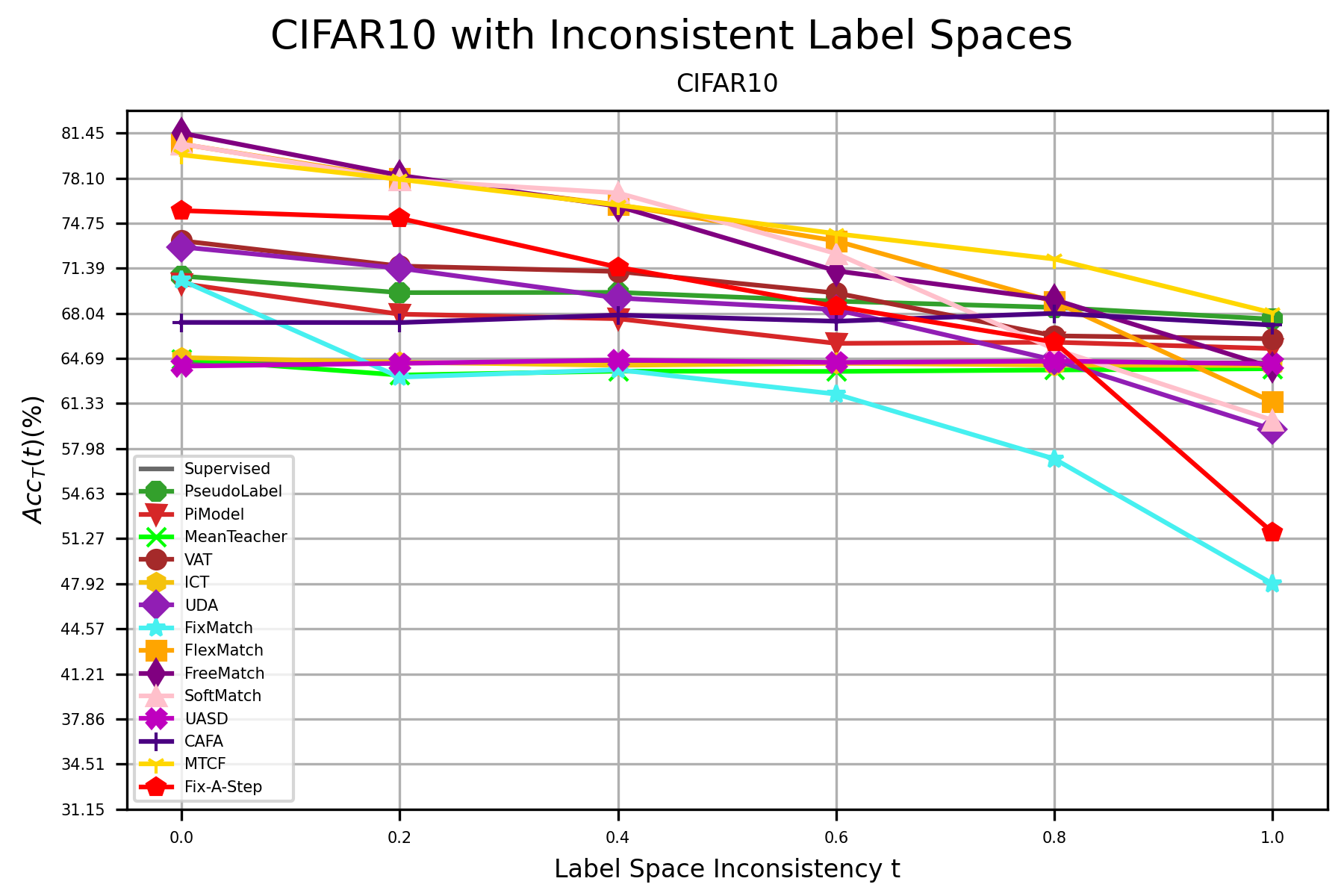

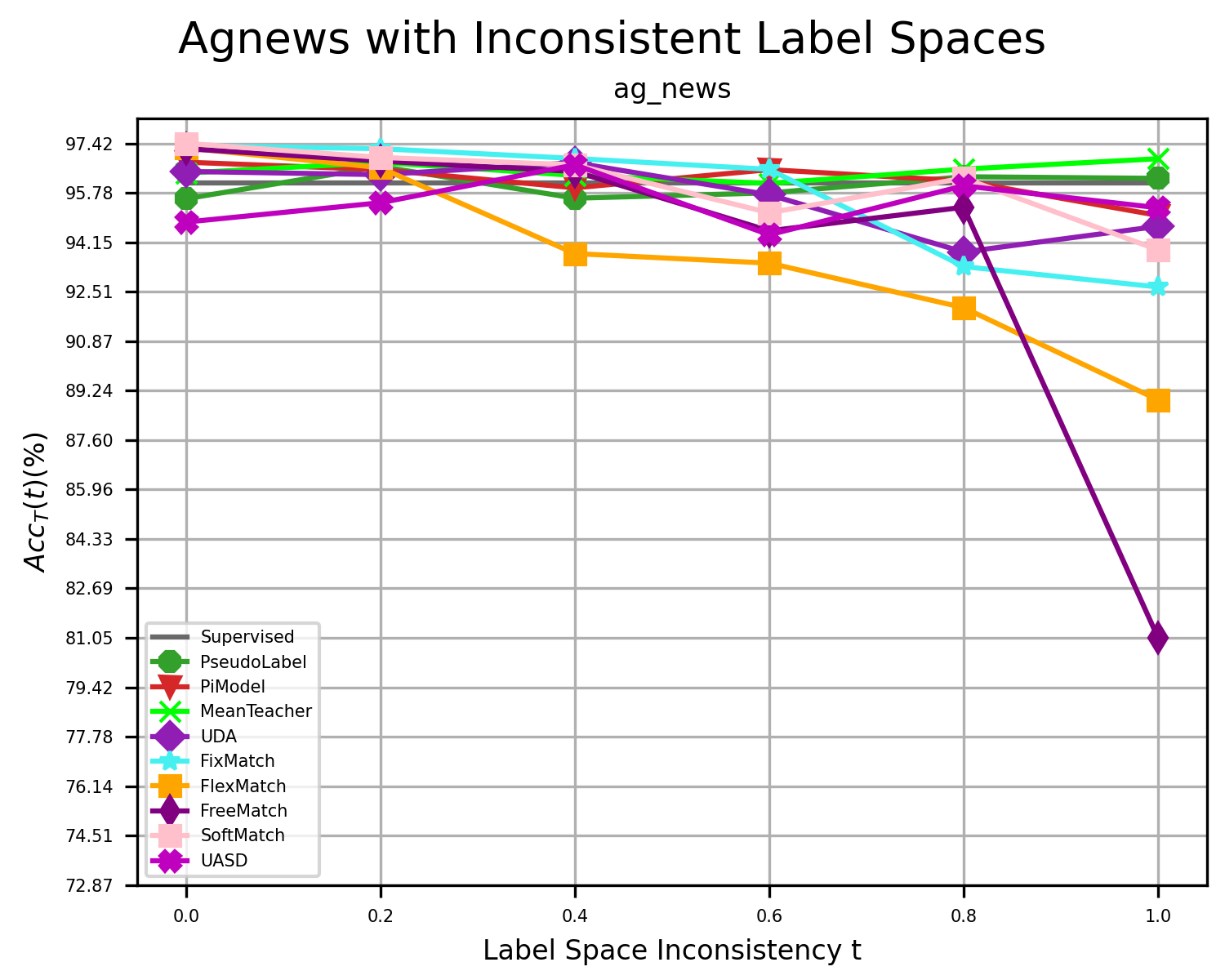

We correct misconceptions in previous research on robust SSL and reshape its research framework by introducing new analytical methods and associated evaluation metrics from a dynamic perspective. We build a benchmark that encompasses three types of open environments (inconsistent data distributions, inconsistent label spaces, and inconsistent feature spaces) to assess the performance of widely used statistical and deep SSL algorithms on tabular, image, and text datasets.

This benchmark is open and continuously updated. To avoid unnecessary disputes, please note that, because of limited computational resources, the current evaluation scope is limited and cannot fully represent the performance of SSL algorithms in real-world applications. We welcome contributions of additional experimental setups, code, and results to improve this benchmark.

Analytical Method

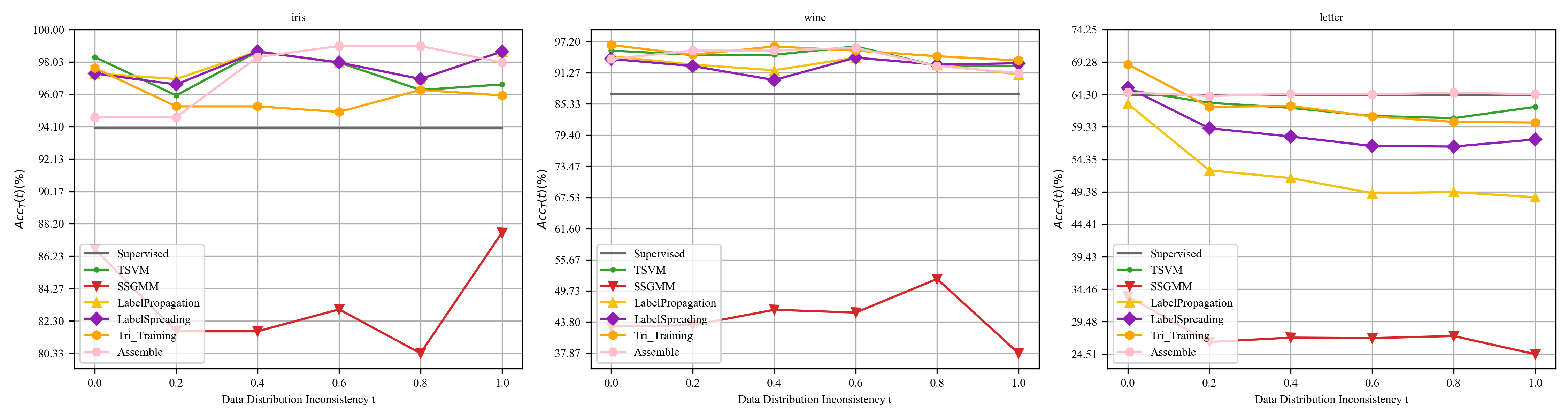

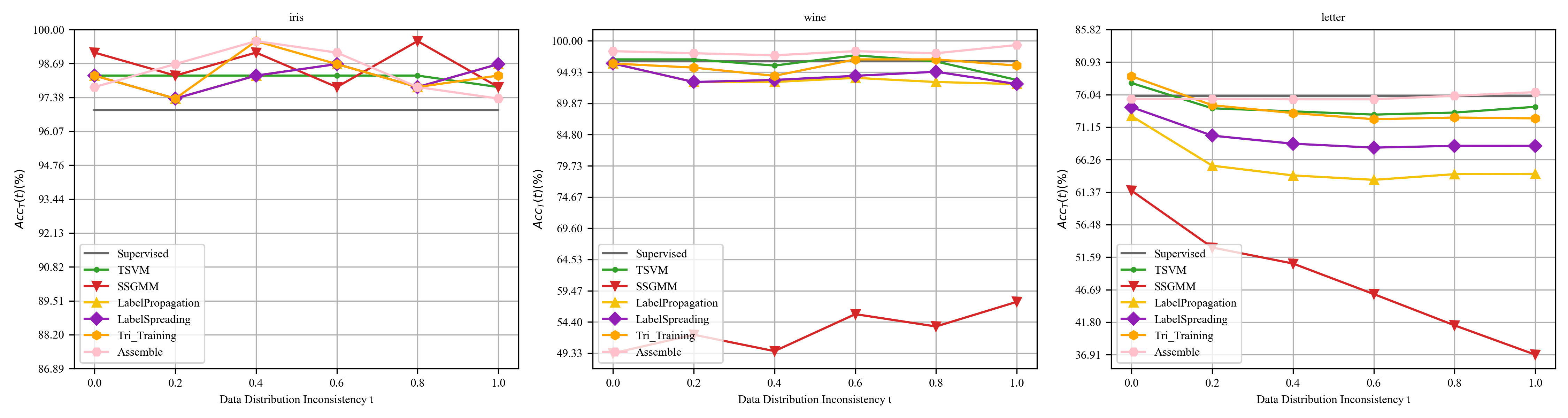

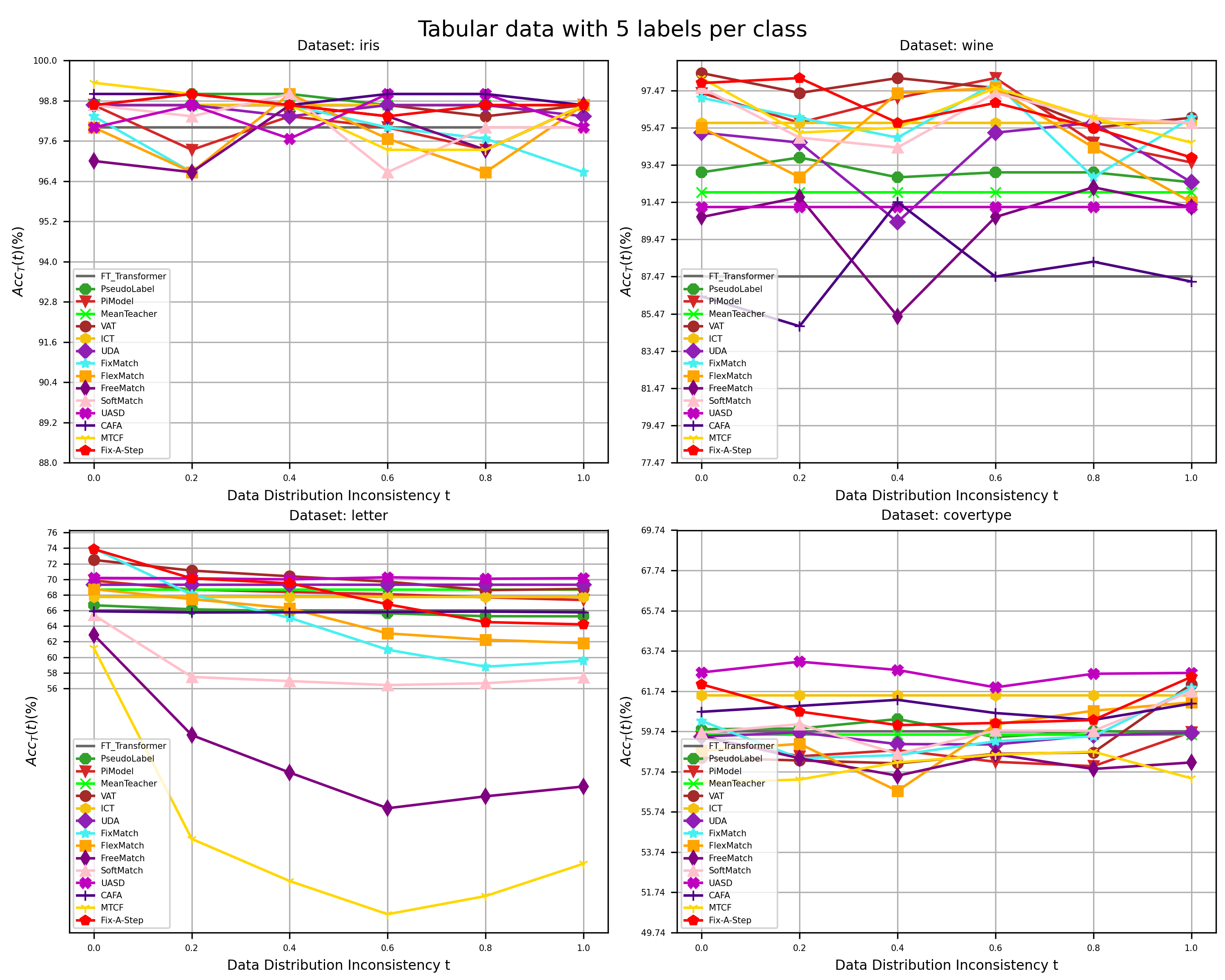

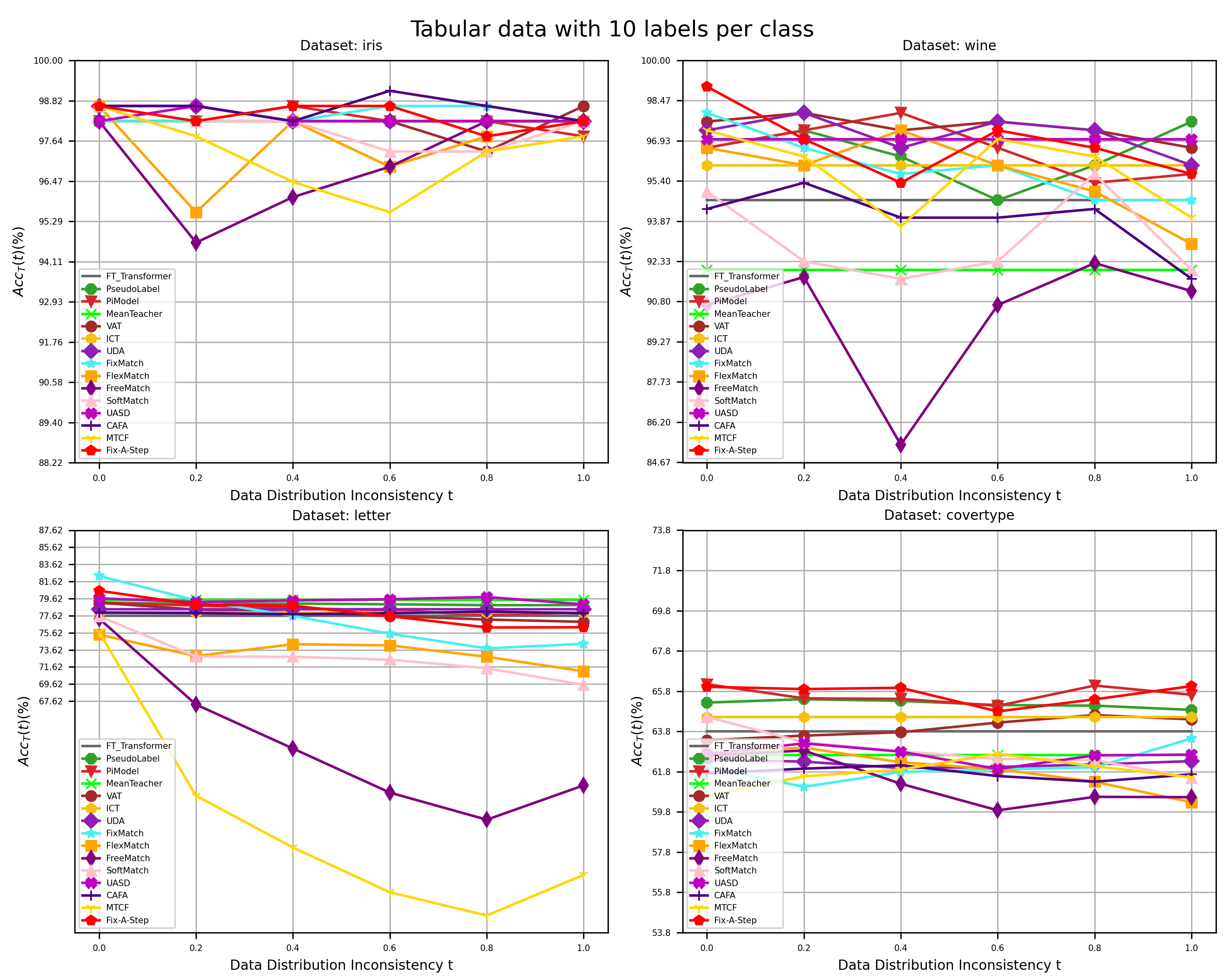

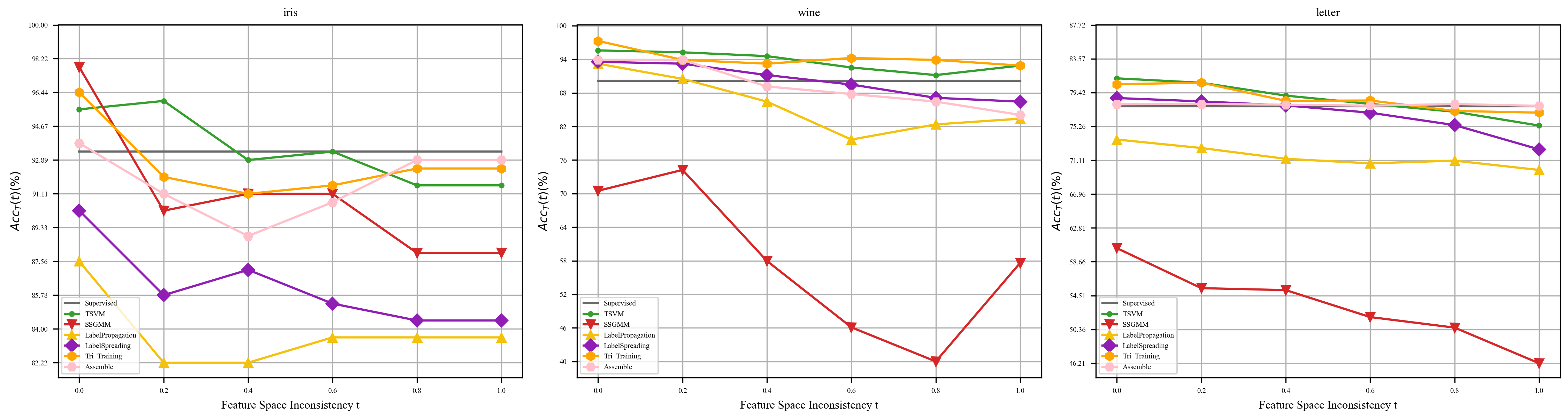

Studying the robustness of algorithms requires a dynamic perspective: we investigate how algorithm performance changes as the degree of data inconsistency varies. We denote the degree of inconsistency between labeled and unlabeled data as $t$ and describe robustness as the overall adaptability of an algorithm or model across all degrees of inconsistency $t$. We denote the function that describes the change in model accuracy with inconsistency as $Acc_T$. We plot the function $Acc_T(t)$ and refer to it as the Robustness Analysis Curve (RAC), which is used to analyze how robust performance is to changes in the degree of inconsistency $t$. The RAC plots the inconsistency $t$ on the horizontal axis against the corresponding accuracy $Acc_T(t)$ on the vertical axis.
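As a rough illustration of the idea, the sketch below builds an RAC by evaluating a model at several inconsistency levels. It is not the benchmark's own code: `robustness_analysis_curve`, `area_under_curve`, `worst_case_accuracy`, and `toy_evaluate` are hypothetical names, the toy accuracy function is made up, and the two summary statistics are assumed here only as plausible ways to condense a curve into a number.

```python
from typing import Callable, List, Sequence, Tuple

Curve = List[Tuple[float, float]]  # (inconsistency t, accuracy Acc_T(t))


def robustness_analysis_curve(
    evaluate: Callable[[float], float], ts: Sequence[float]
) -> Curve:
    """Evaluate accuracy at each inconsistency level t, sorted by t."""
    return [(t, evaluate(t)) for t in sorted(ts)]


def area_under_curve(curve: Curve) -> float:
    """Trapezoidal area under the RAC (assumed summary statistic)."""
    area = 0.0
    for (t0, a0), (t1, a1) in zip(curve, curve[1:]):
        area += 0.5 * (a0 + a1) * (t1 - t0)
    return area


def worst_case_accuracy(curve: Curve) -> float:
    """Lowest accuracy observed over all sampled inconsistency levels."""
    return min(acc for _, acc in curve)


def toy_evaluate(t: float) -> float:
    """Made-up stand-in for training and testing an SSL model at level t."""
    return 0.9 - 0.2 * t


if __name__ == "__main__":
    curve = robustness_analysis_curve(toy_evaluate, [0.0, 0.25, 0.5, 0.75, 1.0])
    for t, acc in curve:
        print(f"t={t:.2f}  Acc_T(t)={acc:.3f}")
    print("area under RAC:", area_under_curve(curve))
    print("worst-case accuracy:", worst_case_accuracy(curve))
```

In practice, `toy_evaluate` would be replaced by a routine that constructs labeled and unlabeled sets at inconsistency level $t$, trains the SSL algorithm, and returns test accuracy.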

Algorithms Used for Evaluation

The list of algorithms is continuously updated.

Statistical Semi-Supervised Learning Algorithms

- Semi-Supervised Gaussian Mixture Model (SSGMM)

- TSVM

- Label Propagation

- Label Spreading

- Tri-Training

- Assemble

Deep Semi-Supervised Learning Algorithms

- Pseudo Label

- PiModel

- MeanTeacher

- VAT

- ICT

- UDA

- FixMatch

- FlexMatch

- FreeMatch

- SoftMatch

- UASD

- CAFA

- MTCF

- Fix-A-Step

Baseline Model

- For statistical learning with tabular data: XGBoost

- For deep learning with tabular data: FT-Transformer

- For deep learning with image data: ResNet50

- For deep learning with text data: RoBERTa